What Is Data Leakage in Credit Risk Models and Why Digital Footprint Data Is Different

Uncover how data leakage distorts credit models and why alternative data carries a different risk profile.

Even the most experienced credit risk teams have seen it happen: a model looks strong in testing, performs well in validation, and then disappoints in production.

In my experience, there are several reasons this gap appears in credit score modeling. One of the most common is data leakage.

In this article, I’ll explain what data leakage is, why it happens, and why traditional and alternative data sources carry different leakage risks.

What is data leakage in credit risk assessment?

Data leakage occurs when a credit model learns from information that would not exist at decision time.

This often includes signals like:

- future delinquencies

- charge-offs

- collections activity

- post-approval account status updates

Leakage usually appears in subtle ways. For example, through updated bureau fields or internal flags added later in the loan lifecycle.

When this happens, model performance looks stronger than it truly is. Accuracy appears high in training and validation, then drops in production.

The model isn’t really predicting risk. Instead, it’s suffering from target leakage. It's partly “remembering” outcomes because the training features are correlated with the target in a way that won’t exist in production.

This is usually accidental. Teams work with large historical datasets, join multiple sources, and build aggregated features.

Without strict time controls and data leakage protection solutions, post-decision information can quietly slip into training data.

The result is predictable: credit decisions become less reliable, losses rise unexpectedly, and confidence in the model erodes.

How data leakage happens in credit risk models

I’ve worked with disciplined credit risk teams who follow rigorous validation processes, but still encounter leakage. The reason is rarely intent. It’s complexity.

Below are the three failure points I see most often.

Failure #1: Temporal misalignment in complex data environments

Credit risk models rarely rely on a single dataset. In practice, they combine:

- layered historical records collected over long periods

- signals pulled from multiple systems

- sources with different update logic and timestamp conventions

In that environment, it becomes easy to lose track of when a signal was created relative to the true decision point.

Ensuring Point-in-Time (PIT) consistency is often the weakest link. Without a rigorous temporal cutoff, features can drift from their state at the moment of application to their state during the repayment period.

Even well-structured validation setups can unintentionally mix older and newer records. That way, it allows the model to learn from information that would not have existed at approval.

Failure #2: Post-approval signals hidden inside “safe” aggregates

In my experience, leakage rarely comes from obviously “future” variables. It usually hides inside reasonable-looking aggregates.

Average balances, repayment patterns, and account activity can look perfectly valid on the surface. But if they include signals shaped after approval, they quietly inflate model performance.

Without strict documentation of feature timing and a clearly defined decision cutoff, tracing these issues becomes difficult.

And under pressure to demonstrate improvements, timing assumptions can slip through validation unnoticed.

This is especially true for bureau and lifecycle-driven credit data. Because it evolves throughout the loan lifecycle, even small reporting misalignments can introduce hidden leakage.

This is not an argument against historical validation. It’s an argument for being precise about the decision point and the data type.

Failure #3: The validation paradox: avoiding historical data to “stay safe”

Here is the paradox I see most often.

Proper model validation requires historical data with known outcomes. In fact, it is often the fastest and most reliable path to verification. For example, our team usually finishes it in 1-2 weeks.

At the same time, credit risk teams are under pressure to eliminate any possibility of leakage.

Out of caution, some avoid historical datasets altogether and validate only on fresh applications without outcomes. It feels safer. In reality, it slows everything down.

Validation cycles stretch for months while outcomes mature. During that time, features evolve and pipelines get adjusted.

By the time results arrive, it becomes harder to isolate what actually drove performance.

Historical data is not the problem. Uncontrolled timing is.

When the decision point is clearly defined and features are strictly limited to what existed at that moment, historical validation becomes an efficient tool. And this is where the data type matters.

Traditional credit data sources and leakage risk

In this section, I’ll break down the usual credit data sources and explain where leakage risk typically appears within each of them.

Credit bureau data

Scores, repayment history, account status, and credit limits continue to change long after a loan is approved.

If time cutoffs are not precisely enforced, later updates can unintentionally slip into training data.

Because bureau information reflects lifecycle developments, even small reporting misalignments can introduce signals that were not available at the true decision point.

Transaction data

Transaction data creates similar challenges.

Balances, inflows and outflows, and spending patterns continue updating after approval.

Without strict temporal cutoffs, future activity may be treated as if it were known at the moment of decision.

Since cash flow behavior is often strongly correlated with default outcomes, even subtle leakage in transaction aggregates can materially inflate model performance.

Internal lender data

Internal lender data often spans the longest timelines and carries the highest hidden risk.

Delinquency records, collection actions, restructuring decisions, manual reviews, and fraud investigation outcomes may sit alongside application data in the same systems.

When controls are loose, pre- and post-decision signals can easily mix.

Many of these variables are shaped by internal operational processes. That is why they may embed outcome-related information in ways that are difficult to trace.

Why this becomes hard to detect

Common lender data sources often include:

- credit bureau repayment history

- internal delinquency and collections data

- bank transaction behavior after loan approval

- manual review and fraud investigation flags

Many of these variables are:

- continuously updated

- shaped by internal operational processes

- tightly correlated with future default events

Without strict time controls, leakage becomes easy to introduce and difficult to detect.

That said, leakage is not inevitable. Some data types are naturally easier to validate safely. Digital footprint data is one of them.

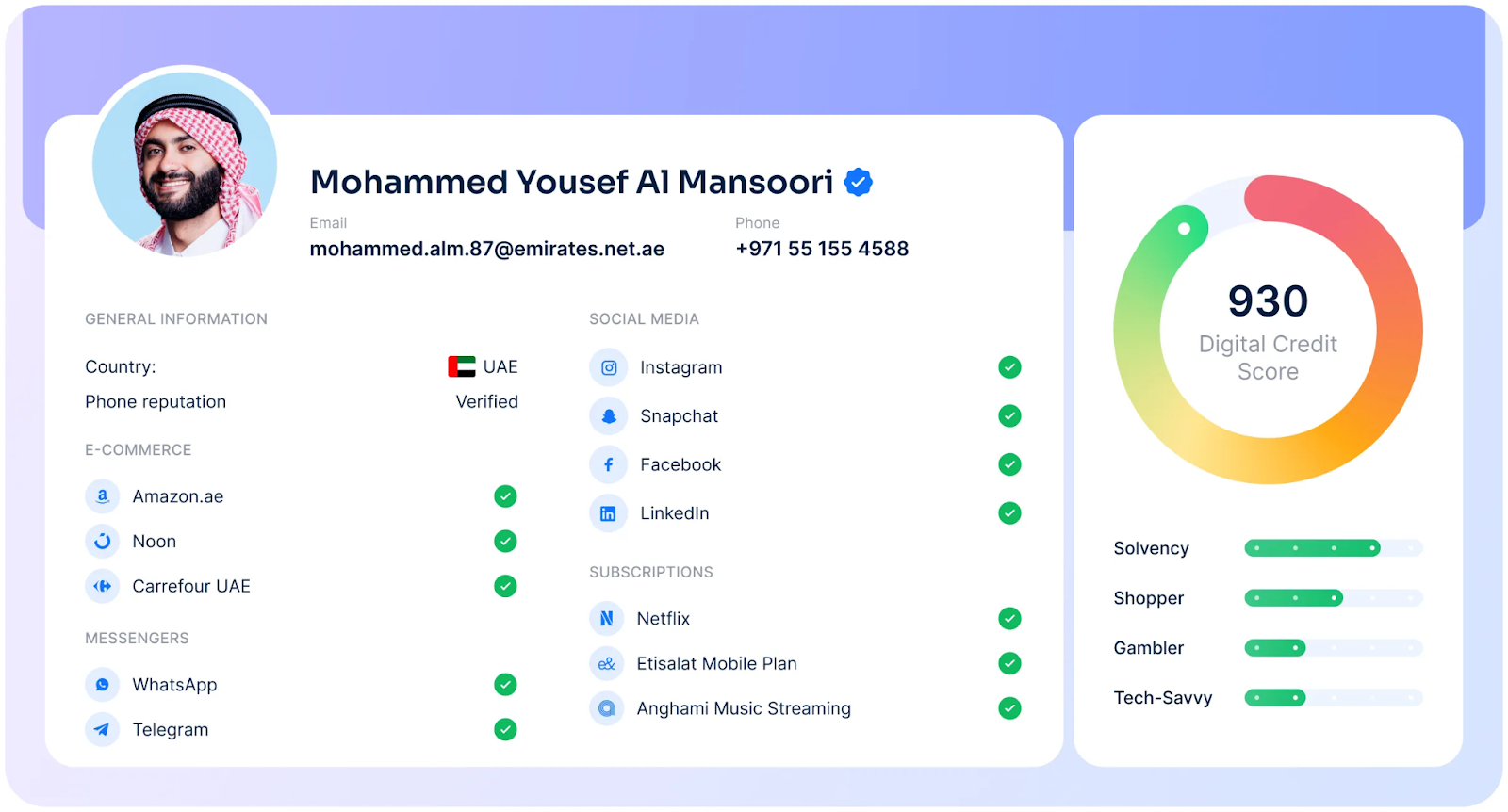

Why digital footprint data carries a different data leakage risk

Digital footprint data is usually captured as a snapshot at the moment someone applies for a loan.

Unlike bureau data, it does not accumulate through the repayment lifecycle. This reduces the risk of pulling in post-decision updates.

As a result, digital footprint data often carries a lower leakage risk. It signals identity stability and behavioral patterns, not repayment events that evolve after approval.

This distinction is part of what is alternative credit scoring: using digital footprint data that is fundamentally different in structure and timing from traditional bureau records.

1. Independent from the loan lifecycle

Digital identifiers exist independently of any loan.

Common examples include:

- email addresses

- phone numbers

- devices

- IP addresses

These identifiers are already present before an application is submitted. They do not depend on repayment behavior or loan performance.

2. Snapshot-based, not outcome-based

Digital footprint data answers questions such as:

- How long has this email existed?

- How often has this phone number appeared historically?

- Has this identifier been seen across multiple applications?

These signals describe stability and usage patterns. They do not update because a borrower defaults or enters collections.

3. Accurate at a point in time, but hard to reconstruct

In practice, it is not always possible to reconstruct exact historical snapshots of digital presence.

For example, there is no reliable way to confirm whether an email existed on a specific platform on a specific historical date.

As a result, digital footprint data used in proofs of concept often reflects the current state of the digital ecosystem.

The data is factually correct at the time of modeling, but it requires careful interpretation during validation.

4. Lower leakage risk, not zero leakage

Digital footprint data is separate from repayment and servicing processes, so leakage behaves differently.

It does not:

- encode future defaults

- include post-loan operational actions

- reflect collections or recovery activity

This difference matters during validation.

For example, alternative credit scoring companies like RiskSeal are typically evaluated using historical applications.

Even when these signals are retrieved later for validation, they describe attributes that existed independently of the loan decision.

Understanding this distinction is central to effective data leakage prevention. It helps teams separate real leakage risks from perceived ones without slowing learning cycles.

How RiskSeal approaches data leakage protection

Concerns about data leakage often come up when teams evaluate real-time digital footprint analysis. That caution is reasonable. Validating new signals in credit risk is rarely simple.

At RiskSeal, we work with credit risk teams on this problem every day.

In practice, leakage risk is best managed through clear data boundaries and pragmatic validation. Not by avoiding historical data.

Our proof-of-concept process reflects that approach. Teams share historical loan applications, RiskSeal scores them, and results are compared against known outcomes.

There are no model changes, no system integrations, and no black-box decisions. All analysis remains fully on the lender’s side.

This setup allows teams to test alternative signals quickly and safely:

- use existing historical data

- run side-by-side comparisons

- evaluate results using their own metrics and controls

In most cases, this type of validation can be completed in one to two weeks.

The key distinction is understanding how different data sources behave. Traditional credit data is tied to the loan lifecycle and requires extra caution around timing.

Digital footprint signals exist before an application is reviewed and are not derived from repayment or collections activity.

Recognizing this difference helps teams separate real leakage risk from perceived one and move faster with confidence.

Key takeaways for choosing a data leakage prevention solution

Data leakage is not a modeling problem. It’s a data discipline problem.

To prevent it, I focus on three things:

- define one decision point and use it consistently

- enforce strict time cutoffs across every data source

- audit every feature for post-decision contamination

Used correctly, digital footprint data helps teams validate faster without relying on variables that evolve after approval.

My final advice is simple: treat time as a feature and control it as aggressively as you control model performance.

The 2026 guide to LATAM digital footprints for credit scoring

Inside the LATAM alternative credit data report

FAQ

What is data leakage prevention?

Data leakage prevention is the process of ensuring that a model only learns from information that was available at the actual decision point.

In credit risk modeling, this means enforcing strict time boundaries so that no post-approval or outcome-based data enters training or validation.

What are data leakage prevention best practices?

The core best practices are:

- Define one clear decision point and use it consistently

- Enforce strict time cutoffs across all datasets

- Audit every feature to confirm it existed at decision time

- Separate pre-decision data from repayment and servicing data

- Validate models using controlled historical datasets

Leakage prevention is primarily about data discipline, not model complexity.

Why does data leakage make credit models look better than they really are?

Leakage introduces information about outcomes into the training data.

When a model learns from signals that reflect future defaults, collections, or repayment behavior, its performance appears artificially strong in validation.

In production, that information does not exist, so performance drops.

Why is it difficult to spot data leakage when testing a model?

Leakage is often subtle.

It rarely comes from obvious “future” variables. Instead, it hides inside aggregated features, updated bureau fields, or internal flags that were added after approval.

Because these variables look legitimate, they can pass validation unless timing is carefully audited.

How does improper time handling lead to hidden leakage?

Improper time handling allows data created after the decision point to be included in model training.

For example, if repayment updates, transaction activity, or internal review flags are not filtered by the exact approval date, the model may learn from information that was not available when the loan was granted.

Can a model show great validation results even if it has data leakage?

Yes.

In fact, models with leakage often show excellent validation metrics because they are partially learning from outcome-related signals.

The issue only becomes visible later, when production performance fails to match expectations.

What types of data most often cause leakage in credit risk models?

Data that evolves after loan approval carries the highest leakage risk. This includes:

- Credit bureau updates

- Transaction data that continues after approval

- Internal delinquency and collections records

- Manual review and fraud investigation flags

Any data tied to the loan lifecycle requires strict time controls.

How can credit teams compare leakage risk across different data sources?

Teams should evaluate:

- Whether the data exists independently of the loan lifecycle

- Whether it continues to update after approval

- How tightly it is correlated with default outcomes

- How easily it can be anchored to a clear decision timestamp

Data that is snapshot-based and independent from repayment processes generally carries lower leakage risk than data that evolves throughout the loan lifecycle.

See more

Explore how digital footprint analysis improves credit scoring models, boosting default prediction accuracy and approval rates in emerging markets.

Discover why Research.com ranks RiskSeal’s digital scoring platform among the top accounts receivable solutions for lenders.

Learn why ISO/IEC 27001:2022 certification matters for financial companies and explore the steps RiskSeal took to achieve it.

.svg)

.webp)

.webp)