How to Increase Lending ROI with Alternative Data Signals

Discover how disciplined testing protects capital while improving portfolio performance.

Credit risk teams work within tight regulatory and capital limits.

Regulation, capital requirements, and audit exposure shape every modeling decision. And ultimately influence how lenders make money.

I understand that caution is rational. But borrower behavior evolves faster than reporting infrastructures adapt. The real question is how to test new signals without adding risk.

Why standing still quietly increases portfolio risk

Risk compounds quietly when models rely on signals that no longer fully describe the market.

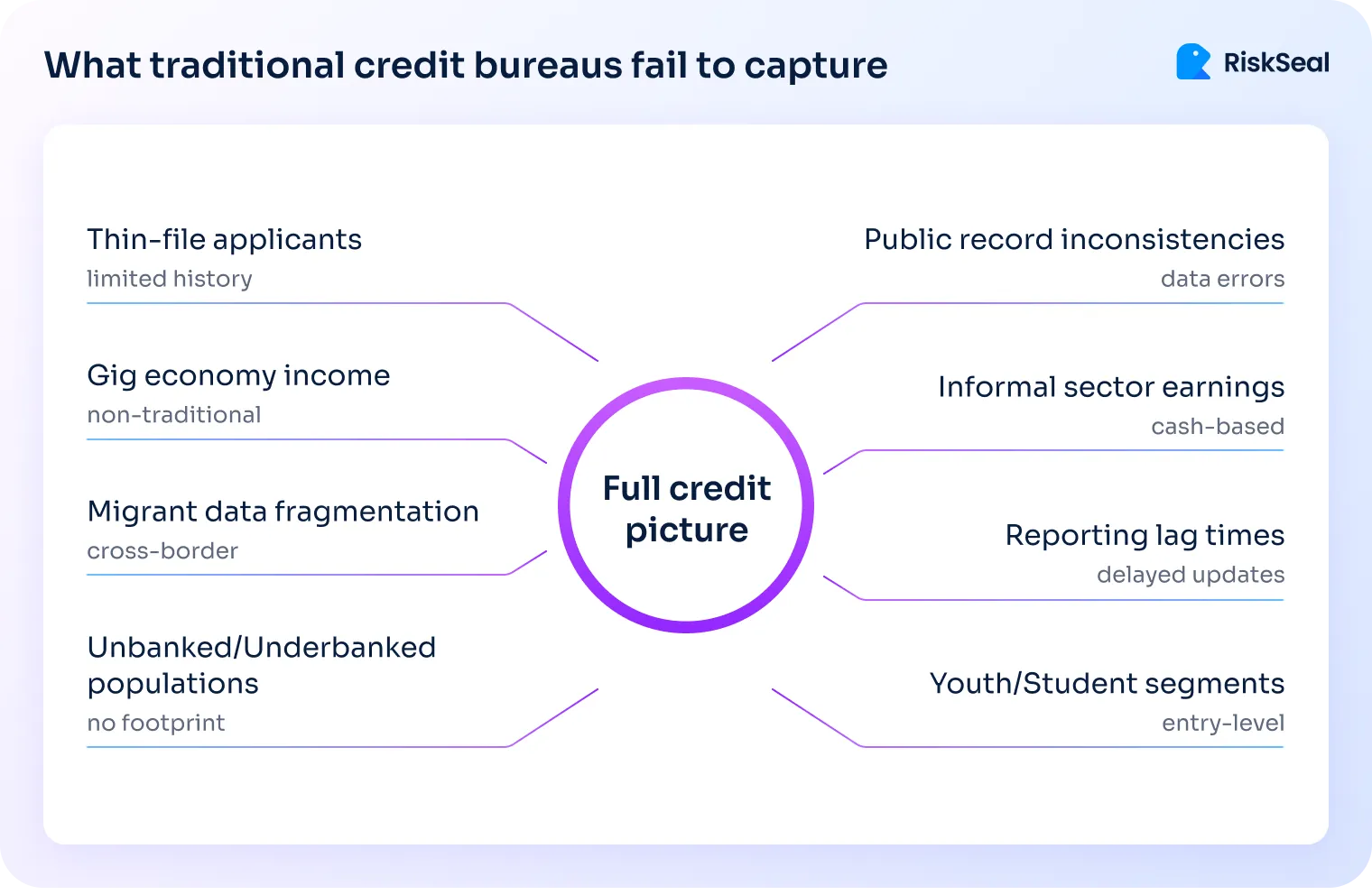

Bureau coverage gaps are expanding faster than you think

Traditional credit bureaus were built for stable employment and long credit histories. They work best when borrowers interact consistently with formal financial products.

Many portfolios no longer fit that pattern. Gig workers, migrants, and younger borrowers often generate thin bureau files.

In emerging markets, penetration remains uneven across regions. Segmentation gaps persist. This reflects how income, work, and financial identity have changed.

A vivid example comes from our recent work with a Philippine BNPL provider.

In that portfolio, more than 70% of applicants had thin or no credit history. Bureau data left large gray areas in decisioning.

At the same time, fraud patterns evolved. Synthetic identities and repeat defaulters re-entered the system. Traditional checks provided limited early warning.

When borrower composition shifts, signal coverage shifts with it. Risk models built on static infrastructure do not fail overnight. They degrade gradually and blind spots widen.

Why leading lenders are diversifying their risk signals

Across digital banks, BNPL platforms, and microfinance institutions, a clear pattern is emerging: bureau data is no longer used alone.

What is driving the shift?

- Thin-file and no-file applicant growth within existing portfolios

- Measurable segmentation gaps in bureau-only models

- Pressure to reduce manual reviews while maintaining speed

- The need for stronger separation in mid-risk segments

What risk teams are observing in validation tests:

- Improved differentiation among thin-file applicants

- Clearer distinction between “no history” and “no stability”

- Controlled adoption of AI in risk management within established frameworks

- Faster automated approvals without proportional risk expansion

- Reduced reliance on manual escalation

From my perspective, diversification strengthens established decision frameworks by improving visibility where bureau data is structurally limited.

The statistical decay built into every static risk model

All predictive models degrade as environments evolve. This is a mathematical property, not a philosophical critique. Repayment dynamics shift all the time across macroeconomic cycles.

Even without coverage gaps, static feature sets lose predictive power. Rank ordering of risk gradually becomes less precise.

In large portfolios, small improvements matter disproportionately. A one to two percent Gini uplift can materially affect expected loss.

The point is not that existing models fail. The point is that signal refreshing sustains portfolio efficiency over time.

Governance complexity is not the same as risk

Regulated lending environments reward consistency and documented controls. That structure protects institutions from unnecessary volatility.

However, disciplined testing does not undermine stability. It applies the same validation logic used in any model recalibration.

Institutional hesitation often looks like resistance. In practice, it is usually governance complexity.

Testing new data does not replace core infrastructure. It measures incremental value under controlled conditions.

How to test new alternative signals without increasing exposure

The difference between experimentation and exposure lies in process design.

What a structured proof of concept actually involves

As an alternative data vendor, our team has conducted multiple proof-of-concept validations across different environments. We’ve found that effective testing follows a repeatable sequence.

1. Use historical decision data

The dataset should reflect real underwriting decisions and known outcomes. This ensures evaluation mirrors actual portfolio behavior.

2. Maintain full data control

The lender manages anonymization and aggregation. No live decision flow changes during validation.

3. Score in parallel

Applications are evaluated using the alternative data API and benchmarked against current model performance.

4. Define a limited objective

The question is specific: does the additional signal improve predictive separation? Nothing beyond incremental predictive value is assessed at this stage.

5. Measure statistically relevant improvements

Teams typically assess the Gini metric. They also examine default separation within critical score bands.

This structure mirrors established model validation practice. It generates actionable insight without introducing production risk.

Why structured testing reduces regulatory and operational risk

Structured testing isolates experimentation from live operations. No approvals, declines, or limits are affected during evaluation.

There is no need to retrain production models immediately. Integration discussions occur only after validation confirms value.

This separation matters for auditability. It preserves clear documentation of decision logic during testing. It also aligns with established model governance standards.

In other words, testing becomes analysis rather than deployment.

What to evaluate beyond headline uplift

Headline improvements rarely tell the full story. The composition of uplift matters more than magnitude.

When assessing incremental signals, I advise teams to focus on:

- Independence from bureaus. Check correlation with existing features. Real value appears when new signals add independent separation.

- Segment-level impact. Thin-file and near-prime segments often reveal the clearest differentiation effects.

- Mid-risk sensitivity. Small separation improvements in mid-risk bands can materially influence portfolio performance.

- Temporal stability. Validate performance across multiple time windows. Signals that deteriorate under minor shifts rarely justify scaling.

The objective is durable enhancement, not temporary optimization. Signals must prove resilient within a live neobank loan management system, not only in backtesting environments.

Red flags that undermine responsible vendor evaluation

Certain commercial practices increase unnecessary risk. Long-term commitments before validation are one example.

Opaque data sourcing is another. Risk teams should understand what features influence scoring outputs.

Independent replication should always be possible. Black-box improvements without transparency weaken governance confidence.

Heavy implementation requirements during early testing also raise concerns. A proof of concept should not demand architectural overhaul.

Vendors earn trust through evidence, not urgency.

Measure financial impact before scaling

Validation is only meaningful when it translates into balance sheet impact.

Translate technical uplift into portfolio economics

Predictive metrics must convert into financial outcomes. Otherwise, improvements remain abstract.

When translating uplift, focus on:

- Default rate impact. Map performance improvements to default rate reductions in key segments. Then translate that into expected loss and capital impact.

- Approval rate discipline. Higher approvals are valuable only if performance remains stable. Volume without quality erodes economics.

- Fraud loss reduction. Digital identity inconsistencies often signal early-stage exposure. Quantify prevented fraud losses explicitly.

- Operational efficiency. Reduced manual review lowers underwriting cost per funded loan.

When metrics connect directly to balance sheet impact, internal alignment improves significantly.

Build a credit business ROI model that withstands scrutiny

An effective framework should remain simple and auditable. Complex projections often slow decision-making unnecessarily.

A structured ROI model typically includes:

- Cost assumptions. Include per-check pricing and projected volume under realistic scenarios.

- Conservative performance estimates. Quantify financial benefits using restrained uplift assumptions. Avoid optimistic bias in early projections.

- Break-even threshold. Calculate how many prevented defaults or fraud losses justify implementation cost.

- Return timeline. Define when impact should materialize. Boards and finance teams value temporal clarity.

This approach transforms experimentation into structured capital allocation.

Scale gradually under monitored conditions

Even validated signals require careful rollout.

Controlled scaling preserves credibility and protects performance stability.

Discontinue what fails to justify itself

Not every data source produces durable uplift. That outcome should not be treated as failure.

Testing exists to filter noise from value. Scaling should remain reserved for evidence-backed improvements.

If financial impact fails to materialize, discontinue responsibly. Capital discipline sustains long-term experimentation capacity.

This mindset keeps innovation compatible with governance rigor.

Innovation and credit risk model testing can coexist

Risk management and innovation are not opposing forces. They operate effectively when connected through carefully planned validation.

Traditional bureau data remains foundational across most lending portfolios. However, evolving borrower behavior demands periodic reinforcement of core models.

In my experience, the safest path forward is structured experimentation. Test methodically, measure rigorously, and scale only what proves durable.

In regulated credit environments, that approach is not aggressive. It is responsible stewardship of both capital and confidence.

The 2026 guide to LATAM digital footprints for credit scoring

Inside the LATAM alternative credit data report

FAQ

What is credit risk model testing, and why is it critical for lenders today?

Credit risk model testing is the structured evaluation of whether new signals or features improve predictive performance without increasing operational or regulatory exposure.

It typically involves backtesting on historical application data and measuring statistical uplift before any production changes occur.

It is critical today because borrower behavior evolves faster than traditional reporting systems. Static models degrade gradually. Without disciplined testing, portfolios accumulate blind spots. Structured validation allows lenders to refresh signals while preserving governance stability.

What does ROI mean in credit decisioning?

In credit decisioning, ROI refers to the measurable financial impact of improved risk assessment. It is not just statistical lift. It is the translation of predictive improvements into lower expected loss, better approval quality, reduced fraud, or lower operational cost.

ROI in this context connects model performance to balance sheet outcomes. If uplift does not affect capital efficiency, loss rates, or cost structure, it remains analytical rather than economic.

How can lenders calculate credit business ROI?

Lenders calculate credit business ROI by linking model uplift to portfolio economics.

Start by mapping predictive improvements to changes in default rates within key segments. Translate that into expected loss reduction and capital impact.

Include fraud loss prevention and operational efficiency gains where relevant. Then compare total financial benefit against implementation and data costs under conservative assumptions.

A structured ROI model should also define break-even thresholds and time-to-return to support capital allocation decisions.

How can lenders test alternative data without full integration into their loan management system?

Alternative data can be tested in parallel using historical applications and known outcomes. Applications are scored externally via API, and results are benchmarked against current model performance.

No live decision flow changes during this phase. Approvals, limits, and declines remain unaffected.

This controlled proof of concept isolates experimentation from production systems. It allows lenders to measure incremental predictive value before considering any integration into the loan management system.

What is considered a meaningful Gini improvement in credit scoring models?

In large portfolios, even a one to two percent Gini improvement can be meaningful. The impact depends on where the uplift occurs.

Improvements concentrated in mid-risk or thin-file segments often create disproportionate economic value.

Headline magnitude alone is insufficient. Lenders should assess whether uplift adds independent separation, remains stable across time windows, and translates into measurable default rate reduction in critical score bands.

How does a small Gini uplift translate into real financial impact at scale?

A small Gini uplift improves rank ordering precision. That affects how risk is distributed across approval bands.

In high-volume portfolios, marginal separation improvements can reduce defaults in key segments or enable safer approval expansion.

When applied across thousands or millions of accounts, even minor percentage shifts can materially affect expected loss and capital allocation. Scale amplifies incremental precision.

What metrics should lenders use when evaluating an alternative data vendor?

Lenders should evaluate statistical performance and structural robustness. Core metrics typically include Gini or KS improvement, as well as default separation within relevant score bands.

Correlation analysis with existing bureau variables is essential to confirm independent value. Segment-level performance, temporal stability, and consistency across economic cycles should also be examined. Evaluation should prioritize durable enhancement over short-term uplift.

What are the biggest red flags when choosing an alternative data provider?

Long-term commercial commitments before validation are a major concern. Testing should precede contractual lock-in.

Opaque data sourcing or black-box methodologies weaken governance confidence. Independent replication must be possible.

Heavy implementation requirements during early testing also signal misalignment. A responsible proof of concept should not require an architectural overhaul. Vendors earn trust through measurable evidence, not urgency or complexity.

See more

Discover why Research.com ranks RiskSeal’s digital scoring platform among the top accounts receivable solutions for lenders.

Explore data enrichment with this hands-on guide. Learn onboarding best practices, avoid common pitfalls, and see real use cases.

Discover how credit institutions can ensure GDPR compliance when using alternative data for credit scoring.

.svg)

.webp)